Icebergs of information loiter throughout process manufacturing IT, waiting to sink any information integration project. The impact of semantic technologies is being felt in medicine, life sciences, intelligence, and elsewhere, but can they solve this problem in process manufacturing? The ability to federate information from multiple data sources into a schema-less structure, and then deliver that federated information in any format and in accordance with any standard schema, uniquely positions semantic technology to address it. Is this a sweet spot for semantic technologies?

Process Manufacturing Application Focus over the Years

Over the years we have been solving problems within process manufacturing IT, only to uncover more problems. Once the problem was measurement data in silos, which was solved by the introduction of real-time data historians. However, that created the problem of data visibility, solved by the introduction of graphical user interfaces. This introduced data overload, which was partially solved by the introduction of analytical tools to digest the information and produce diagnostics. Unfortunately, these tools were difficult to deploy across all assets within an organization, so we have been trying to solve that problem with information models. The current problems are how to convert diagnostics into actionable knowledge using workflow engines, and how to keep applications sustainable as solutions increase in complexity.

Process Manufacturing Application Problems and Solutions over the Years

| | 1985-1995 | 1990-2000 | 1995-2005 | 2000-2010 | 2005-2015 | 2010- |
|---|---|---|---|---|---|---|
| Problem | Measurement data in silos | Data access and visualization | Analysis and business intelligence | Contextualized information | Consistent actioning | Sustainability |
| Industry Response | Real-time databases collecting measurements (proprietary) | Graphical user interfaces, trending and reporting tools (proprietary) | Analytical tools to digest data into information and diagnostics | Plant data models (ProdML, ISA-95, ISO 15926, IEC 61970/61968, proprietary) | ISO 9001 | Outsourcing, standards |
| Consequence | Data but no user access | Data overload | Deployability of analysis to all assets | Interpretation limited to experts | Complexity, much more than RTDB, limiting sustainability | Improved ongoing application benefits |

However, it is not only increased technological complexity that is causing problems. Business decisions now cross many more business boundaries. When measurement data was trapped in silos, we were content with unit-wide or plant-wide data historians. Now a well performance problem might involve a maintenance engineer in Houston accessing a MIMOSA-based maintenance management system, an operations engineer in Aberdeen accessing an OPC UA-based data historian, a production engineer in London accessing a custom system driven by WITSML-based feeds, and a facilities engineer using an ISO 15926 facilities management model. Not only are the participants in different locations and business units, but they also rely on different systems using different models to support their decision making. Yet they should all be talking about the same well, measured by the same instruments, producing the same flows, and processed by the same equipment.

The problem is that these operational support systems are not simply data silos whose homogeneous data we need to merge to answer our questions. In fact, these operational support systems are icebergs of information. Above the surface, they present a public perspective focused on the core operational function of the application. However, this data needs context, so below the surface lies much of the same information that is contained in other systems. This information provides the context for the operational data so that the operational system can perform its required functions. For example, the historian needs to know something about the instruments that are the source of its measurements, and maintenance management systems need to know not only about the equipment to be maintained but also about the location of that equipment, both physically and organizationally.

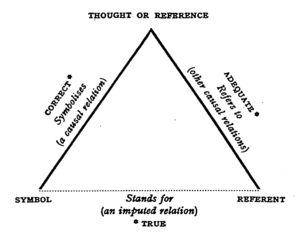

Figure 1: Icebergs of Information

Icebergs of information are not limited to the operational data stores deployed in organizations. An essential practice in these days of interoperability requirements is the adoption of model standards. However, even these exhibit the same problem, as shown in Figure 2, which maps the available standards to their focus within the hydrocarbon supply chain.

Figure 2: Multiple Overlapping Model Standards

Increasing regulatory and competitive demands on the business are forcing decision making to be more timely and more integrated across traditional business boundaries. However, these icebergs are getting in the way of effective decision making.

One way to make any or all of this information available to consumers is to create the bigger iceberg: ‘simply’ create the relational database schema that covers every past, current, and future business need, and build adapters to populate this database from the operational data stores. Unfortunately, this mega-store can only grow more complex as it keeps up with the expanding scope of information required to support decision-making processes.

Figure 3: Integration using the Bigger Iceberg

Alternatively, we can keep building data marts every time someone has a different business query. However, these do not provide the timeliness required to support operational decision making.

We cannot meet the decision-making needs of the business with one mega-store, because it will never keep up with changing business requirements. Instead we need a babel-fish (with thanks to The Hitchhiker's Guide to the Galaxy).

This babel-fish can consume all of the different operational data in different standards and translate it into whatever standard the end consumer wants. Thus the babel-fish will need to know that OPC UA's concept 'hasInstrument' has the same meaning as MIMOSA's concept 'Instrumented'. Similarly, 10FIC107 from an OPC UA provider is the same as 10-FIC-1-7 from MIMOSA.

1. Information providers (operational data stores) within the business will want to provide information according to their capabilities, but preferably using the standards appropriate for their application. For example, measurements should use OPC UA and maintenance should use MIMOSA.

2. Information consumers will want to consume information in the form of one or more standards appropriate for their application.

Figure 4: Integration babel-fish

First, a definition: a semantic model organizes all data and knowledge as RDF triples {subject, property, object}. Thus {:Peter, :hasAge, 21^^:years} and {:Pump101, :manufacturedBy, :Rotek} are examples of RDF triples. RDF triples can be persisted in a variety of ways: SQL tables, custom organizations, NoSQL stores, XML files, and many more. If we were designing a relational database to hold these RDF triples, we would need only one ‘table’, so it may appear that we have no schema in the relational-design sense, where key relationships enforce integrity and unique indices enforce uniqueness. However, we can add further statements about the data, such as {:Pump101, :type, :ReciprocatingPump} and {:ReciprocatingPump, :subClassOf, :Pump}[5]. Used in combination with a reasoner, these let us infer consequences from the asserted facts, such as that :Pump101 is a type of :Pump, and (given further statements that :Peter is a :Person and that people and pumps are disjoint) that :Peter is not a :Pump, despite rumors to the contrary. These triples can be visualized as the links in a graph, with the subject and object being the nodes of the graph and the property the name of the edge linking these nodes:

Figure 5: RDF Triples as a graph
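The triple model and the subclass inference described above can be sketched in a few lines of Python. This is a minimal illustration, not a real RDF reasoner: triples are plain string tuples, `:type` and `:subClassOf` stand in for `rdf:type` and `rdfs:subClassOf` as in the text, and only two RDFS entailment rules (class membership through a subclass, and subclass transitivity) are applied until nothing new is derived. The `infer` helper is hypothetical.

```python
# Triples as plain (subject, property, object) tuples; ":type" and
# ":subClassOf" follow the text's shorthand for rdf:type and rdfs:subClassOf.
Triple = tuple[str, str, str]

TYPE, SUBCLASS = ":type", ":subClassOf"

asserted: set[Triple] = {
    (":Peter", ":hasAge", "21^^:years"),
    (":Pump101", ":manufacturedBy", ":Rotek"),
    (":Pump101", TYPE, ":ReciprocatingPump"),
    (":ReciprocatingPump", SUBCLASS, ":Pump"),
}


def infer(triples: set[Triple]) -> set[Triple]:
    """Apply two RDFS rules repeatedly until no new triple is derived."""
    closure = set(triples)
    while True:
        new: set[Triple] = set()
        for s, p, o in closure:
            for s2, p2, o2 in closure:
                if o != s2 or p2 != SUBCLASS:
                    continue
                if p == TYPE:
                    # X type C, C subClassOf D  =>  X type D
                    new.add((s, TYPE, o2))
                elif p == SUBCLASS:
                    # C subClassOf D, D subClassOf E  =>  C subClassOf E
                    new.add((s, SUBCLASS, o2))
        if new <= closure:  # fixpoint reached: nothing new was derived
            return closure
        closure |= new


inferred = infer(asserted)
print((":Pump101", TYPE, ":Pump") in inferred)  # True
print((":Peter", TYPE, ":Pump") in inferred)    # False
```

Note that :Peter simply never acquires a :Pump type here. Concluding that he is not a pump would need an explicit disjointness statement, because RDF reasoning makes an open-world assumption.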

Over the years, new modeling metaphors have been introduced to solve perceived or actual problems with their predecessors. For example, the relational model had perceived difficulties with reporting, model complexity, flexibility, and data distribution. A semantic model helps solve these problems.

Figure 6: Evolution of Model Metaphors

· In response to the perceived reporting issues, OLAP techniques were introduced along with the data warehouse. This greatly eased the problems of user reporting and data mining. However, it introduced the problem of data duplication.

o A semantic model can query against a federated model in which information remains distributed throughout the original data sources.

· In response to the perceived complexity issues, various forms of object-oriented modeling were introduced. There is no doubt that it is easier to think of one's problem in terms of an object model than in terms of a complex relational or ER model, especially when there are a large number of entities and relations.

o The semantic model is built around the very simple concept of statements of fact, such as {:Peter, :hasAge, 21^^:years} and {:Pump101, :manufacturedBy, :Rotek}, combined with statements that describe the model, such as {:Pump101, :type, :ReciprocatingPump} and {:ReciprocatingPump, :subClassOf, :Pump}.

· The model flexibility problem occurs when, after the model has been designed, the business needs the model to change. In response, the choice is either to make the original model anticipate all potential uses, at the risk of complexity, or to use an object-relational approach in which new attributes can be added without changing the underlying storage schema.

o In semantic models the model itself is expressed in triples, using RDFS, SKOS, OWL, etc., so it changes by adding statements rather than by altering a schema. Thus RDF is also used as the physical model (in RDF stores, at least).

· There have been various responses to data distribution.

o In the relational world there is not much choice other than to replicate the data from heterogeneous data stores using Extract-Transform-Load (ETL) techniques. In the case of homogeneous but distributed databases, distributed queries are possible, although they require intimate knowledge of all the schemas in all of the distributed databases.

o In the object-oriented world we are in a worse situation: it is very difficult to manage a distributed object model in which different objects, or even the attributes of a single object, are held in different places.
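The flexibility and physical-model points in the list above can be illustrated with a single three-column table, here in SQLite via Python's standard `sqlite3` module. This is a hypothetical sketch rather than how any particular RDF store is built: the table layout, the `assert_triple` and `describe` helpers, and the `:lastOverhaul` property and its value are all made up for illustration. Adding an attribute the original design never anticipated is just another row, and the class hierarchy lives in the same table as the data.

```python
import sqlite3

# One three-column table holds every statement; there is no per-entity schema.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE triples (subject TEXT, property TEXT, object TEXT, "
    "PRIMARY KEY (subject, property, object))"
)


def assert_triple(s: str, p: str, o: str) -> None:
    """Store a statement; duplicates are ignored thanks to the composite key."""
    conn.execute("INSERT OR IGNORE INTO triples VALUES (?, ?, ?)", (s, p, o))


def describe(subject: str) -> list[tuple[str, str]]:
    """Return every (property, object) pair recorded for a subject."""
    rows = conn.execute(
        "SELECT property, object FROM triples WHERE subject = ? ORDER BY property",
        (subject,),
    )
    return rows.fetchall()


assert_triple(":Pump101", ":manufacturedBy", ":Rotek")
assert_triple(":Pump101", ":type", ":ReciprocatingPump")

# A new business need: no ALTER TABLE, just another row (hypothetical property).
assert_triple(":Pump101", ":lastOverhaul", "2011-06-30")

# The model is data too: class relationships sit in the same table.
assert_triple(":ReciprocatingPump", ":subClassOf", ":Pump")

print(describe(":Pump101"))
```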

The good news is that a semantic approach is the ideal (perhaps even the only) way to solve the information integration problem, as follows:

1. Convert to RDF normal form: Convert all source data into RDF. The data can be left at source and fetched on demand (federated) or moved into temporary RDF storage.

- There are already standard ways of doing this for any spreadsheet, relational database, XML schema, and more. For example, TopBraid Suite (http://www.topquadrant.com/products/TB_Suite.html) provides converters and adaptors for all common data sources. It is relatively easy to create more mappings, such as for OPC UA. The dynamic adapters act as SPARQL endpoints.

2. Federated data model: Create 'rules' that map one vocabulary to another.

- The language of these rules would be RDFS, SKOS, and OWL. For example, you can declare {OPCUA:hasInstrument, owl:equivalentProperty, Mimosa:Instrumented}. Note that these are simply additional statements expressed in RDF, which a reasoner then uses to infer consequences, such as that any statement made with OPCUA:hasInstrument also holds for Mimosa:Instrumented. Analogous owl:sameAs statements about individual identifiers let it infer that :FI101 is actually the same as :10FIC101.

- More sophisticated rules can also be created directly using RDF and SPARQL. For examples, see SPIN or SPARQL Rules at http://spinrdf.org/ and http://www.w3.org/Submission/2011/SUBM-spin-overview-20110222/

3. Chameleon data services: Create consumer queries that use SPARQL to extract information from the combined model into the required standard.

- For example, even though all instrument data is in OPC UA, a consumer could use a MIMOSA interface to fetch this data. The results can then be published as web services for consumption by external applications using SPARQLMotion (http://www.topquadrant.com/products/SPARQLMotion.html).

Figure 7: Federation End-to-End

Although data will be stored in different formats (relational, XML, object, Excel, etc.) according to different schemas, it can always be converted into RDF triples. 'Always' is a strong word, but it really does work. There are already ways of doing this for any spreadsheet, relational database, XML schema, and more, and it is relatively easy to create more mappings, such as for OPC UA. The data can be left at source and fetched on demand (federated) or moved into temporary RDF storage. For example, TopBraid Suite (http://www.topquadrant.com/products/TB_Suite.html) provides converters and adaptors for all common data sources.

Figure 8: Conversion to RDF Normal Form
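Conversion to RDF normal form can be sketched for the simplest case, a tabular export, loosely in the spirit of the W3C Direct Mapping of relational data to RDF: each row becomes a subject named by its key column, and each remaining column becomes a property. The CSV content, the column names, the use of an `OPCUA` prefix, and the `rows_to_triples` helper are all hypothetical; a production adapter would also decide which values are links to other resources rather than literals.

```python
import csv
import io
from collections.abc import Iterable

Triple = tuple[str, str, str]


def rows_to_triples(rows: Iterable[dict[str, str]], key: str, prefix: str) -> list[Triple]:
    """Map each row to triples: the key column names the subject, other columns become properties."""
    triples: list[Triple] = []
    for row in rows:
        subject = ":" + row[key]
        for column, value in row.items():
            if column != key and value:  # skip the key itself and empty cells
                triples.append((subject, f"{prefix}:{column}", value))
    return triples


# Hypothetical historian tag export; tags, columns, and values are illustrative.
HISTORIAN_CSV = """tag,hasInstrument,units
10FIC107,FT-107,m3/h
10TI201,TT-201,degC
"""

triples = rows_to_triples(csv.DictReader(io.StringIO(HISTORIAN_CSV)), key="tag", prefix="OPCUA")
for triple in triples:
    print(triple)
```

Because every source ends up as the same (subject, property, object) shape, the outputs of different adapters can be merged by simple set union.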

A federated data model allows different graphs (aka databases) to be aggregated by linking their shared objects. This applies to real-time measurements (OPC UA), maintenance (MIMOSA), production data (ProdML), or any external database. We can visualize this as combining the graphs of the individual operational data stores into a single graph.

Of course, there will be vocabulary differences between the different data sources. For example, in the OPC UA data source you might have a property OPCUA:hasInstrument, while in a MIMOSA data source the equivalent is called Mimosa:Instrumented. So the federated data model incorporates 'rules' that map one vocabulary to another. The language of these rules would be RDFS, SKOS, and OWL. For example, in OWL you can declare {OPCUA:hasInstrument, owl:equivalentProperty, Mimosa:Instrumented}. Note that these are simply additional statements expressed as RDF triples, which a reasoner then uses to infer consequences, such as that every statement made with OPCUA:hasInstrument also holds for Mimosa:Instrumented. Combined with identity statements like those described next, the reasoner can also infer that :FI101 is actually the same as :10FIC101.
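The vocabulary mapping can be sketched as a small rule engine over tuples. This sketch uses `owl:equivalentProperty`, the OWL construct for declaring that two properties have the same meaning, and applies it in both directions until no new triples appear. The `apply_property_mappings` helper and the `:FT107` instrument identifier are hypothetical, and a real reasoner handles far more of OWL than this single rule.

```python
Triple = tuple[str, str, str]
EQUIV_PROP = "owl:equivalentProperty"


def apply_property_mappings(triples: set[Triple]) -> set[Triple]:
    """Restate every triple under each property declared equivalent to its own."""
    result = set(triples)
    while True:
        # Collect declared equivalences; equivalence is symmetric, so add both directions.
        pairs = {(s, o) for (s, p, o) in result if p == EQUIV_PROP}
        pairs |= {(b, a) for (a, b) in pairs}
        # Copy each statement across every matching equivalence.
        new = {(s, b, o) for (s, p, o) in result for (a, b) in pairs if p == a}
        if new <= result:  # fixpoint: chains such as A = B = C are fully resolved
            return result
        result |= new


# Hypothetical merged graph: one OPC UA statement plus the mapping rule.
graph = {
    (":10FIC107", "OPCUA:hasInstrument", ":FT107"),
    ("OPCUA:hasInstrument", EQUIV_PROP, "Mimosa:Instrumented"),
}
merged = apply_property_mappings(graph)
print((":10FIC107", "Mimosa:Instrumented", ":FT107") in merged)  # True
```

The mapping rule is itself just a triple in the graph, which is why it can be added or changed without touching any source system.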

There will also be identity differences between the different data sources. These can also be handled by additional statements, such as {:TANK#102, owl:sameAs, :TK102}. This allows a reasoner to infer that the statement {:TK102, :has_price, 83^^:$} also applies to :TANK#102, implying {:TANK#102, :has_price, 83^^:$}.

Figure 9: Information Federated from Multiple Data Sources
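Identity reconciliation with owl:sameAs can be sketched the same way. The hypothetical `same_as_closure` helper below groups aliases with a union-find structure and then restates every triple for every alias of its subject and object, which reproduces the {:TANK#102, :has_price, 83^^:$} inference from the text. It deliberately ignores aliased properties and every other OWL feature.

```python
Triple = tuple[str, str, str]
SAME_AS = "owl:sameAs"


def same_as_closure(triples: set[Triple]) -> set[Triple]:
    """Restate every triple for every alias of its subject and object."""
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps the trees shallow
            x = parent[x]
        return x

    # Merge every pair of identifiers declared to be the same individual.
    for s, p, o in triples:
        if p == SAME_AS:
            parent[find(s)] = find(o)

    # Group the identifiers under their representative.
    aliases: dict[str, set[str]] = {}
    for node in list(parent):
        aliases.setdefault(find(node), set()).add(node)

    def names(x: str) -> set[str]:
        # Identifiers never mentioned in a sameAs statement are their own only alias.
        return aliases[find(x)] if x in parent else {x}

    return {(s2, p, o2) for s, p, o in triples for s2 in names(s) for o2 in names(o)}


graph = {
    (":TANK#102", SAME_AS, ":TK102"),
    (":TK102", ":has_price", "83^^:$"),
}
result = same_as_closure(graph)
print((":TANK#102", ":has_price", "83^^:$") in result)  # True
```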

To extract information from the federated graph, the best choice is SPARQL, the semantic counterpart of SQL, only simpler. Whilst SQL allows one to query the contents of multiple tables within a database, SPARQL matches patterns within the graph. With SQL we need to know to which table each field belongs. With SPARQL we define the graph pattern that we want to match, and the query engine searches throughout the federated graphs to find the matches. In the example illustrated below, we do not need to know that the price attribute comes from one data source whilst the volume comes from another. In fact, SPARQL allows even more flexibility: the price attribute for Tank#101 could come from a different data source than the price attribute for Tank#102. This is part of the magic of semantic technology.

Figure 10: Graph Pattern matching with SPARQL
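The graph pattern matching that SPARQL performs can be approximated by a naive nested-loop join over triple patterns, where terms starting with `?` are variables. This is a sketch of a basic graph pattern only (no OPTIONAL, FILTER, or query optimization). The `match` helper is hypothetical, and the three sources and all values except the 83^^:$ price are made up. It shows that the query never names the source of each attribute, even when the two tank prices come from different sources.

```python
Triple = tuple[str, str, str]
Binding = dict[str, str]


def _unify(term: str, value: str, binding: Binding) -> bool:
    """Match one pattern term against one triple component, extending the binding."""
    if term.startswith("?"):  # a variable
        if term in binding:
            return binding[term] == value
        binding[term] = value
        return True
    return term == value  # a constant must match exactly


def match(pattern: list[Triple], graph: set[Triple]) -> list[Binding]:
    """Return every binding that satisfies all triple patterns (nested-loop join)."""
    solutions: list[Binding] = [{}]
    for triple_pattern in pattern:
        extended: list[Binding] = []
        for binding in solutions:
            for triple in graph:
                candidate = dict(binding)
                if all(_unify(t, v, candidate) for t, v in zip(triple_pattern, triple)):
                    extended.append(candidate)
        solutions = extended
    return solutions


# Hypothetical sources: each tank's price comes from a different system.
source_a = {(":TANK#101", ":has_price", "80^^:$")}
source_b = {(":TANK#102", ":has_price", "83^^:$")}
source_c = {
    (":TANK#101", ":has_volume", "1200^^:m3"),
    (":TANK#102", ":has_volume", "950^^:m3"),
}
federated = source_a | source_b | source_c

query = [("?tank", ":has_price", "?price"), ("?tank", ":has_volume", "?volume")]
for row in sorted(match(query, federated), key=lambda b: b["?tank"]):
    print(row)
```

The shared variable `?tank` is what joins the price and volume statements; a SPARQL engine expresses the same join declaratively and plans its execution far more efficiently.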

SPARQL can be used to query the federated graph directly for reporting purposes. However, most consumers of the information will expect to interface with a web service, SOAP or REST being the most popular. These services do not have to be programmed. Instead, they can be declared using SPARQLMotion (http://www.sparqlmotion.org) to produce easily consumed and adaptable web services. The SPARQLMotion designer is shown below:

Figure 11: Example SPARQLMotion

Despite solving a complex data integration problem, Semantic/RDF is inherently simpler than the alternatives. Can there be anything simpler than storing all knowledge as RDF triples? Despite this simplicity, we do not lose any expressivity.

There is no predefined schema to limit flexibility. However, the schema rules encoded as tables and keys in the relational model can still be expressed using RDFS, OWL, and SKOS statements.

Deconstructing all information into statements (triples) allows data from distributed sources to be easily merged into a single graph.

Any information model can be reconstructed from the merged graph using SPARQL and presented as web services (SOAP or REST).

WITSML™ (Wellsite Information Transfer Standard Markup Language) is an industry initiative to provide open, non-proprietary, standard interfaces for technology and software that monitor and manage wells, completions, and workovers.

ISO 15926 provides integration of life-cycle data for process plants, including oil and gas production facilities.